Sometimes, even as a tech reporter, you can be caught out by how quickly technology improves. Case in point: I only learned today that my iPhone has a feature I've long wanted: the ability to identify plants and flowers from just a photo.

It's true that many third-party apps have offered this functionality for years, but the last time I tried them I was disappointed by their speed and accuracy. And yes, there's Google Lens and Snapchat Scan, but it's not always convenient to open an app I wouldn't otherwise use.

But since the launch of iOS 15 last September, Apple has offered its own version of this visual search feature. It's called Visual Look Up, and it's pretty good.

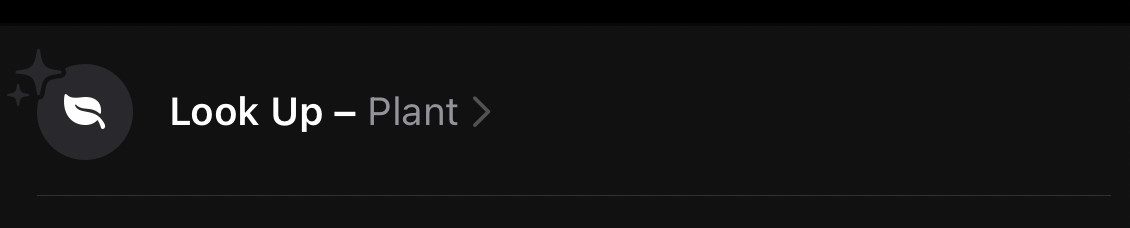

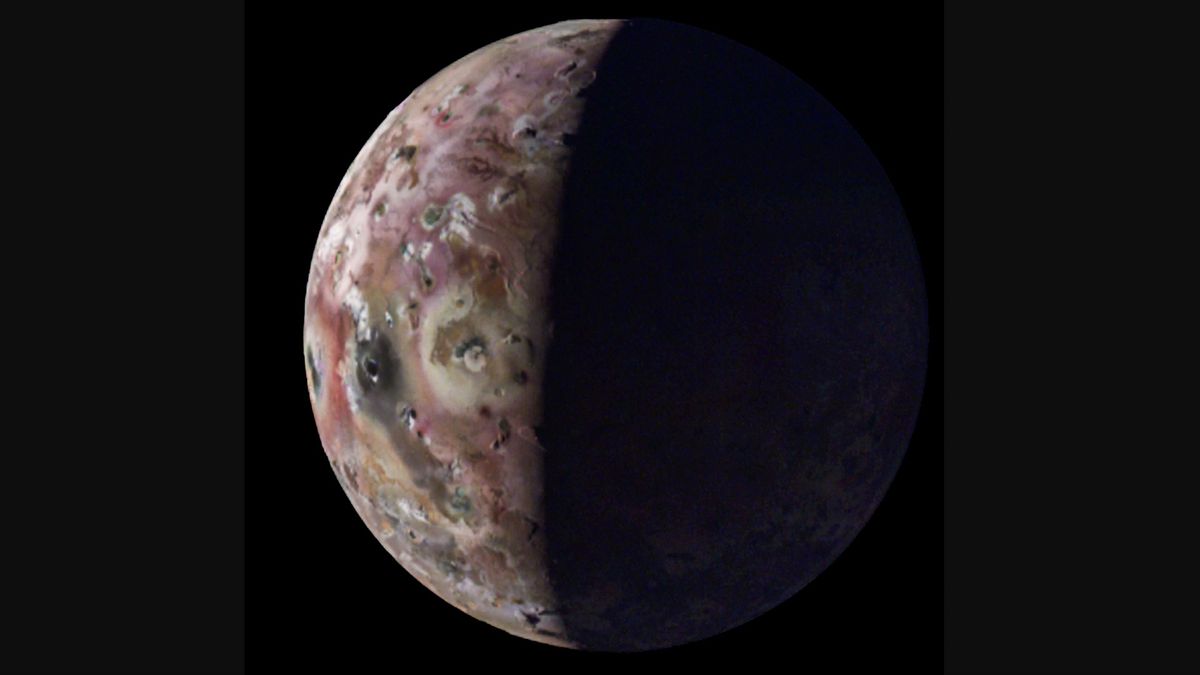

It's simple to use. Just open a photo or screenshot in the Photos app and look for the blue "i" icon below it. If it has a little sparkly ring around it, iOS has found something in the photo that it can identify using machine learning. Tap the icon, then tap Look Up, and it will try to surface some useful information.

It works not only for plants and flowers but also for landmarks, art, pets, and "other objects." It's not perfect, of course, but it has surprised me more often than it has disappointed me. Here are some examples from my camera roll:

Although Apple announced this feature at WWDC last year, it hasn't been very clear about where it's available. (I spotted it via a link in one of my favorite tech newsletters, excessive.) Even the official Visual Look Up support page sends mixed messages, saying in one place that it's "US only" and then listing other compatible regions elsewhere.

Visual Look Up is still limited in availability, but access has expanded since launch. It's now available in English in the US, Australia, Canada, the UK, Singapore, and Indonesia; in French in France; in German in Germany; in Italian in Italy; and in Spanish in Spain, Mexico, and the US.

It's a nice feature, but it also made me wonder what else visual search could do. Imagine taking a picture of your new houseplant, for example, only for Siri to ask, "Do you want me to set up reminders for a watering schedule?" Or, if you take a picture of a landmark on vacation, having Siri search the web for its opening hours and where to buy tickets.

I learned long ago that it's foolish to pin your hopes on Siri doing anything too advanced. But these are the kinds of features we might eventually get with future AR or VR headsets. Let's hope that if Apple does offer this kind of functionality, it makes a bigger splash.
