The Defender of Rights, Claire Hédon, calls for better oversight of these devices in a report.

Faced with the spread of biometric technologies in our society, the Defender of Rights warns, in a report released on Tuesday, of the “substantial risks” they pose.

“The advances that biometric technologies make possible must not come at the expense of part of the population, nor at the price of general surveillance,” warns the independent authority headed by Claire Hédon. It calls for better oversight of these devices.

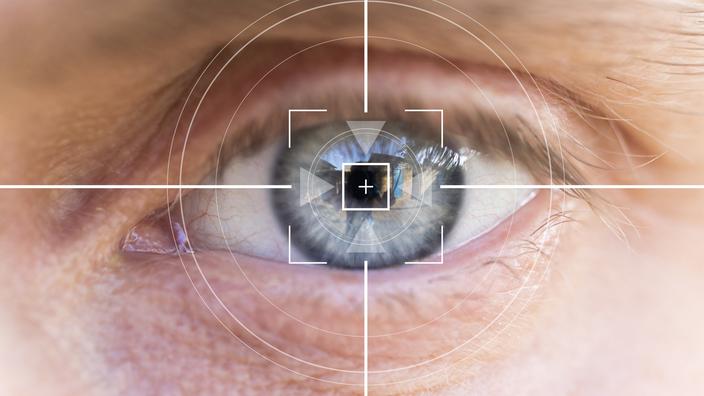

Carrying out a transaction with a fingerprint, automatically identifying a suspect in a gathering, sending targeted advertising to someone based on their physical appearance… For a number of years, technologies that recognize or identify a person, or even assess their personality, by analyzing their biometric data (facial features, voice or behavioral characteristics) have been spreading.

But they are “especially intrusive” and present a “significant risk of violation of fundamental rights,” according to the report. First, they expose people to a “security breach with particularly serious consequences,” explains the Defender of Rights. While a password can be changed after a hack, fingerprints cannot be changed once they have been stolen from a database. That is enough to put “anonymity in public space in danger by allowing a form of general surveillance,” according to the institution.

Beyond simple privacy

These issues go beyond the protection of privacy alone and touch on the blind spots of the General Data Protection Regulation (GDPR), which Europe has applied since 2018. The report points to the “unmatched ability” of biometric technologies “to amplify and automate discrimination.”

Because their development is closely tied to the learning algorithms on which they are based, they may carry a number of discriminatory biases, which were already described in a joint statement by the Defender of Rights and the National Commission on Informatics and Liberty (CNIL) in 2020.

The document released on Tuesday recalls a weakness of artificial intelligence: humans can pass their stereotypes on to it by unknowingly building biased databases. “In the United States, three Black people have already been wrongly imprisoned because of errors in facial recognition,” the report recalls.

Recruitment companies, for their part, already market software that scores candidates during a job interview. Yet these technologies, which aim to assess emotions, “make many mistakes” and violate labor law. There is also the “chilling effect” of biometric technologies: would everyone demonstrate freely if drones equipped with facial recognition flew over the marches?

Control them like drugs

To improve oversight, the Defender of Rights makes a number of recommendations. First, it calls on all private and public actors to reject emotion-assessment technologies.

In matters of policing and justice, the institution believes that “the use of biometric identification cannot apply to just any type of offense.” According to the institution, facial recognition, already banned by the Constitutional Council for police drones, should also be banned for video surveillance and body-worn cameras.

Finally, the Defender of Rights calls for a “review of the restrictions” on these technologies, in particular by requiring an “external and independent audit” and by continuously monitoring the effects of biometric identification devices and algorithms, “on the model of the monitoring of adverse drug reactions.”
