Bing Edwards / Ars Technica

Last week, Swiss software engineer Matthias Pullman Discover That famous photomontage model stable spread It can compress existing bitmaps with fewer visual artifacts than JPEG or WebP at high compression ratios, although there are significant caveats.

Stable spread is a file Artificial intelligence photomontage model which typically generate images based on text descriptions (called “claims”). The AI model learned this ability by studying millions of images taken from the Internet. During the training process, the model makes statistical associations between images and related words, making a much smaller representation of basic information about each image and storing them as “weights,” which are mathematical values that represent what the AI image model knows, so they occur.

When stable diffusion analyzes and “compresses” the images into a weight form, they reside in what researchers call a “latent space,” a way of saying it exists as a kind of blurry potential that can be perceived in the images once they are decoded. With Stable Diffusion 1.4, the weights file is about 4GB, but it’s knowledge of hundreds of millions of images.

While most people use Stable Diffusion with text prompts, Bühlmann clipped the text encoder and instead forced his images through the Stable Diffusion image encoding process, which takes a low-resolution 512×512 image and converts it to a higher resolution 64×64 latent representation of the space. At this point, the image exists with a much smaller data size than the original image, but it can still be expanded (decoded) to a 512×512 image with fairly good results.

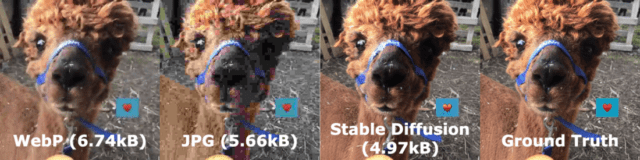

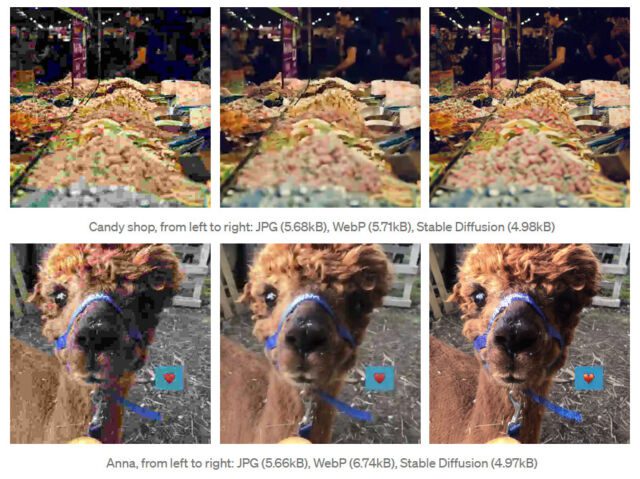

While running tests, Bühlmann found that images compressed with Stable Diffusion look subjectively better at higher compression ratios (smaller file size) than JPEG or WebP. In one example, it shows an image of a candy store compressed to 5.68 KB using JPEG, 5.71 KB using WebP, and 4.98 KB using Stable Diffusion. The stable diffusion image appears to have finer details and less clear compression results than those compressed in other formats.

Bühlmann’s method currently comes with significant limitations, however: it is not good with faces or text and, in some cases, can actually hallucinate detailed features in the decoded image that were not present in the source image. (You probably don’t want the image compressor to invent details in an image that doesn’t exist.) Also, file decoding requires 4GB of stable propagation weights and additional decoding time.

While this use of Stable Diffusion is unconventional and is more of a fun hack than a practical solution, it may indicate a new, future use of photo montage models. Could be a Pullman symbol found on Google Colab, You will find more technical details about his experience in Posted as AI.

“Hipster-friendly explorer. Award-winning coffee fanatic. Analyst. Problem solver. Troublemaker.”

More Stories

Google confirms that Wear OS 5 and Android TV updates are coming

Fallout 4 Next Gen Update release date: When will it arrive?

Steam closes refund policy loophole, finally comes up with a name for the thing where you can play a game early if you pre-order